Life Alert's Citation Architecture in AI Search: Why Visibility Did Not Become Recommendation

April 2026 analysis of Life Alert across 1,026 prompts, 10 high-intent clusters, and 6 AI platforms. The core finding: third-party editorial and trust source...

In this article

- 01 At a Glance

- 02 Executive Summary

- 03 What Citation Architecture Means in This Case Study

- 04 The Baseline Pattern

- 05 The Source Layer That Taught AI How to Talk About Life Alert

- 06 Editorial Review Share Was High in the Clusters That Mattered Most

- 07 Best Medical Alert Systems: The Clearest Citation Failure

- 08 Comparisons: The Brand Entered the Conversation, But Not the Recommendation Layer

- 09 Pricing: The Biggest Demand Pool Was Also a Citation-Narrative Problem

- 10 Reviews: Sparse Owned Support in a Decision-Stage Cluster

- 11 Alternatives: Full Presence, Almost No Citation Power

- 12 Compare Medical Alert Systems: Some Support, No Endorsement

- 13 How to Choose: Branded Support Without Organic Recommendation Strength

- 14 Free Medical Alert Systems: Affordability and Evidence Layers Collided

- 15 Legitimacy and Trust: Community and Trust Domains Defined the Answer Set

- 16 Owned-Domain Citations Were Real, But Too Narrow to Change the Outcome

- 17 Cross-Platform Pattern: A Shared Evidence Problem, Not One Broken Platform

- 18 Directional Competitor Pressure

- 19 What This Case Study Shows

- 20 Methodology

- 21 Limitations

- 22 Frequently Asked Questions

- 23 Final Thoughts

Independent market analysis. Not client work.

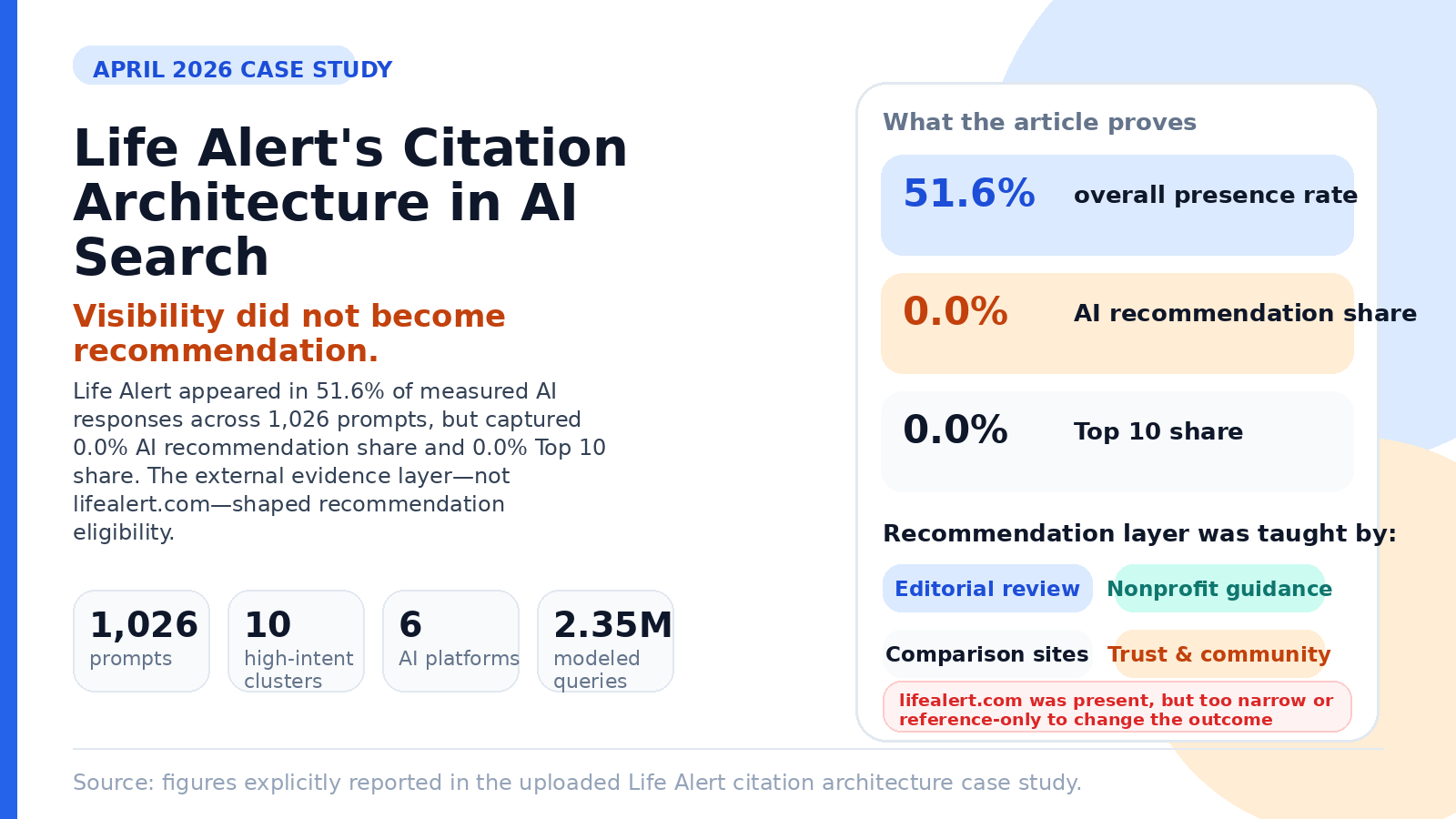

At a Glance

| Field | Value |
|---|---|
| Company analyzed | Life Alert |
| Vertical | Medical Alert Systems / Personal Emergency Response Systems (PERS) |
| Report month | April 2026 |
| Primary evidence set | 1,026 prompts |
| High-intent prompt clusters | 10 |
| Platforms in scope | 6 |
| Total modeled cluster query volume | 2,351,993 |
| Overall presence rate | 51.6% |
| AI recommendation share | 0.0% |
| Top 1 / Top 3 / Top 10 share | 0.0% / 0.0% / 0.0% |
| Core interpretation | Life Alert was visible, but not recommendation-qualified |
| Central citation finding | External editorial, nonprofit, review, and trust domains shaped the recommendation layer more than Life Alert's own domain |

Executive Summary

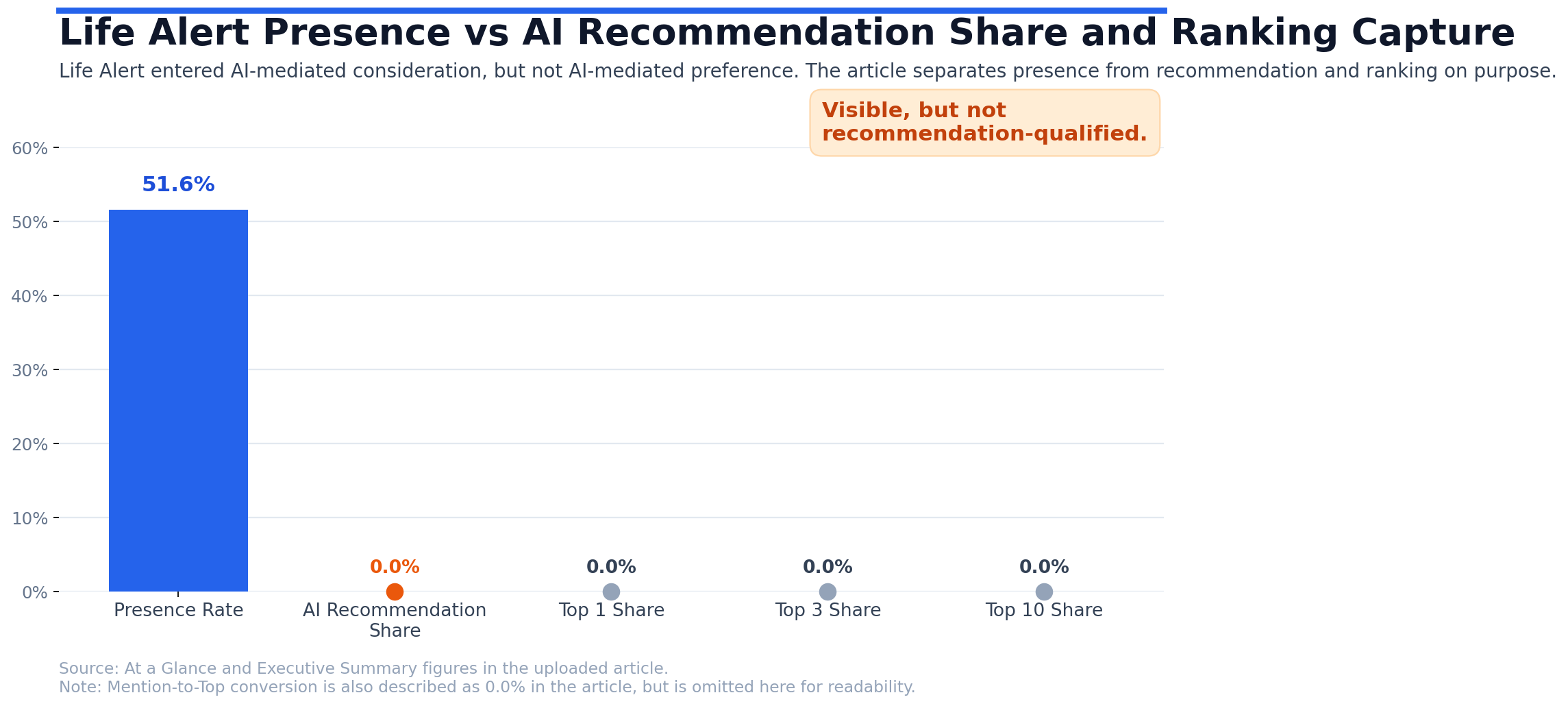

Life Alert did not have a simple visibility problem in AI search. It had a citation architecture problem.

In the April 2026 LLM Authority Index baseline, Life Alert appeared in 51.6% of evaluated AI responses across six major AI discovery environments. That is meaningful visibility. But none of that visibility converted into measurable recommendation strength. AI recommendation share was 0.0%. Top 1, Top 3, and Top 10 capture were each 0.0%. Mention-to-Top conversion was also 0.0%.

The reason mattered more than the score. Across the highest-intent buying clusters, AI systems were not primarily relying on Life Alert's own domain to decide whether the brand belonged in recommendation sets. They were relying on third-party editorial, nonprofit, comparison, review, and trust-oriented domains. Those domains were setting the category frame. In too many cases, Life Alert's own site was absent, thin, brand-specific, or cited for factual reference rather than persuasive endorsement.

That is the core case-study lesson. In AI-mediated buying journeys, a brand can be known and still be unqualified for recommendation if the surrounding evidence layer is controlled by other sources.

What Citation Architecture Means in This Case Study

For this analysis, citation architecture means the network of owned and third-party sources that AI systems rely on when they compare, explain, shortlist, and recommend options.

That includes:

- official vendor domains

- editorial review sites

- nonprofit or trust-oriented guides

- comparison publishers

- marketplace and directory pages

- forums and community sources

- trust and reputation environments

This matters because AI systems do not build buying guidance from owned pages alone. They synthesize what the broader web is teaching them. If the external evidence layer is narrow, unfavorable, or dominated by other brands, visibility alone will not produce recommendation outcomes.

The Baseline Pattern

The baseline pattern was consistent across all ten high-intent clusters:

- Life Alert was present

- Life Alert was not advanced into recommendation sets

- Life Alert did not achieve measurable ranking capture

- Life Alert's own domain was usually weaker than the external source layer that shaped the answer

This was most commercially important in the largest demand pools:

- Pricing: 1,137,893 modeled queries

- Free Medical Alert Systems: 343,722

- Best Medical Alert Systems: 251,041

- Alternatives: 207,612

- How to Choose a Medical Alert System: 178,448

Together, those five clusters accounted for 2,118,716 of the 2,351,993 modeled queries in the packet, or roughly 90% of modeled demand.
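The combined weight of the five largest clusters can be checked directly from the volumes listed above. The short Python check below uses only figures stated in this article; the variable names are illustrative.

```python
# Modeled query volumes for the five largest clusters, as listed above.
top_clusters = {
    "Pricing": 1_137_893,
    "Free Medical Alert Systems": 343_722,
    "Best Medical Alert Systems": 251_041,
    "Alternatives": 207_612,
    "How to Choose a Medical Alert System": 178_448,
}

# Total modeled cluster query volume from the At a Glance table.
total_modeled_volume = 2_351_993

top_five_volume = sum(top_clusters.values())
top_five_share = top_five_volume / total_modeled_volume

print(
    f"Top five clusters: {top_five_volume:,} of {total_modeled_volume:,} "
    f"modeled queries ({top_five_share:.1%})"
)
```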

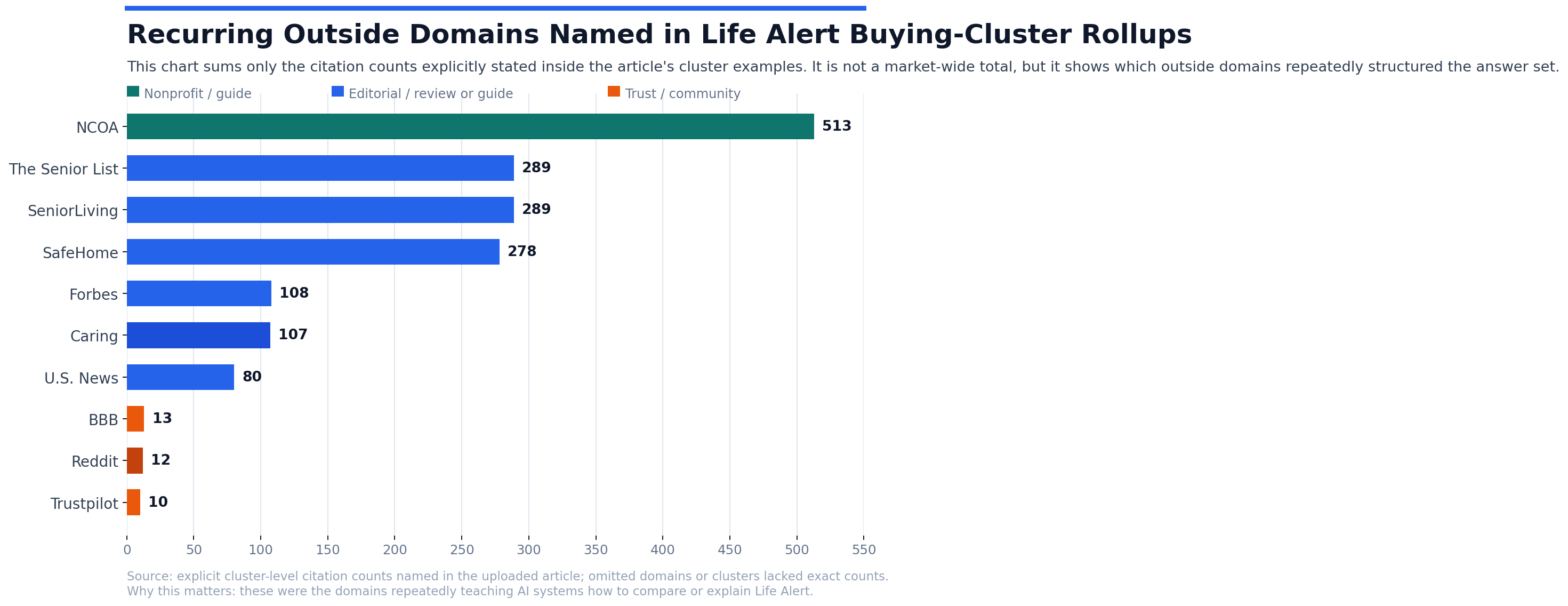

The Source Layer That Taught AI How to Talk About Life Alert

Across clusters, the recommendation layer was shaped by a recurring set of outside domains. The most repeatedly cited domains included:

- ncoa.org

- forbes.com

- seniorliving.org

- safehome.org

- theseniorlist.com

- realestate.usnews.com

- safewise.com

- retirementliving.com

- assistedliving.org

- caring.com

The baseline also documented strong trust-oriented influence from:

- bbb.org

- reddit.com

- trustpilot.com

This is the key architecture story: AI systems were not improvising Life Alert's position from thin air. They were reproducing a structured external evidence layer that already existed on the public web.

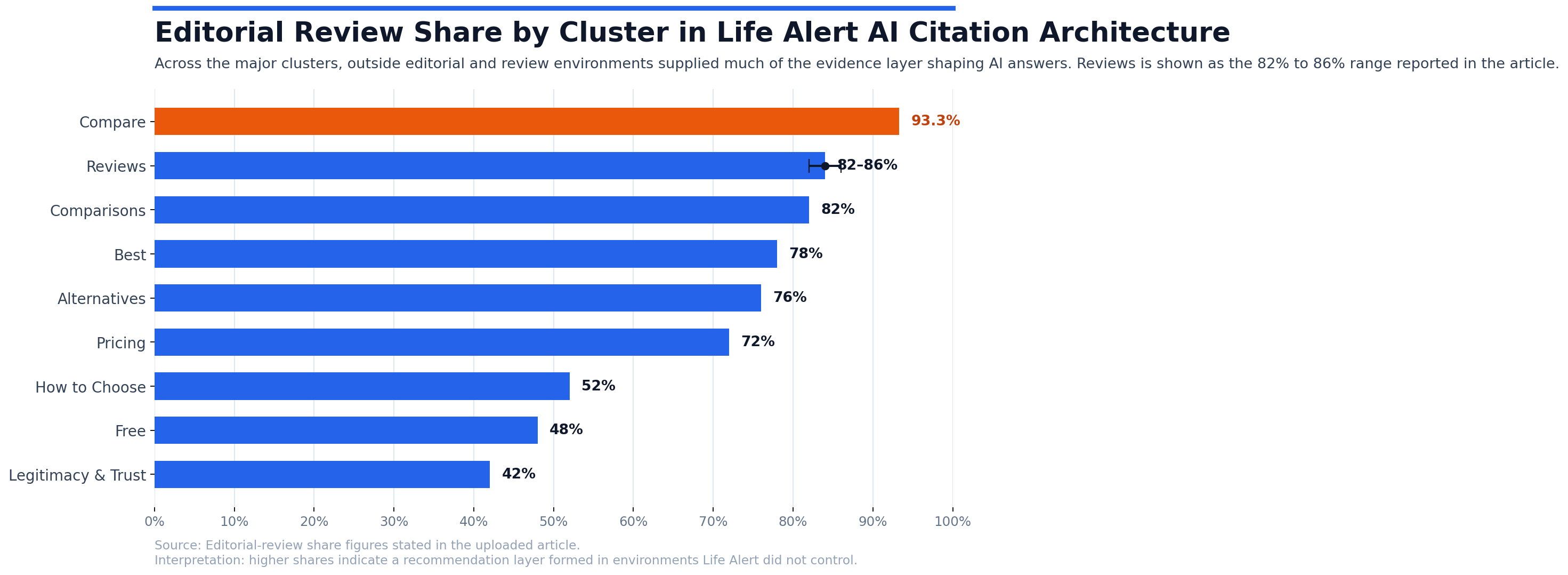

Editorial Review Share Was High in the Clusters That Mattered Most

The report's editorial-review shares show how dominant the external evidence layer was:

- Best: 78%

- Comparisons: 82%

- Pricing: 72%

- Reviews: approximately 82% to 86%

- Alternatives: 76%

- Compare: 93.3%

- How to Choose: 52%

- Free: 48%

- Legitimacy & Trust: 42%

That distribution matters because it shows the recommendation layer was being formed in environments where Life Alert did not control the evidence. In plain language: the web was teaching the machines a story that Life Alert did not own.

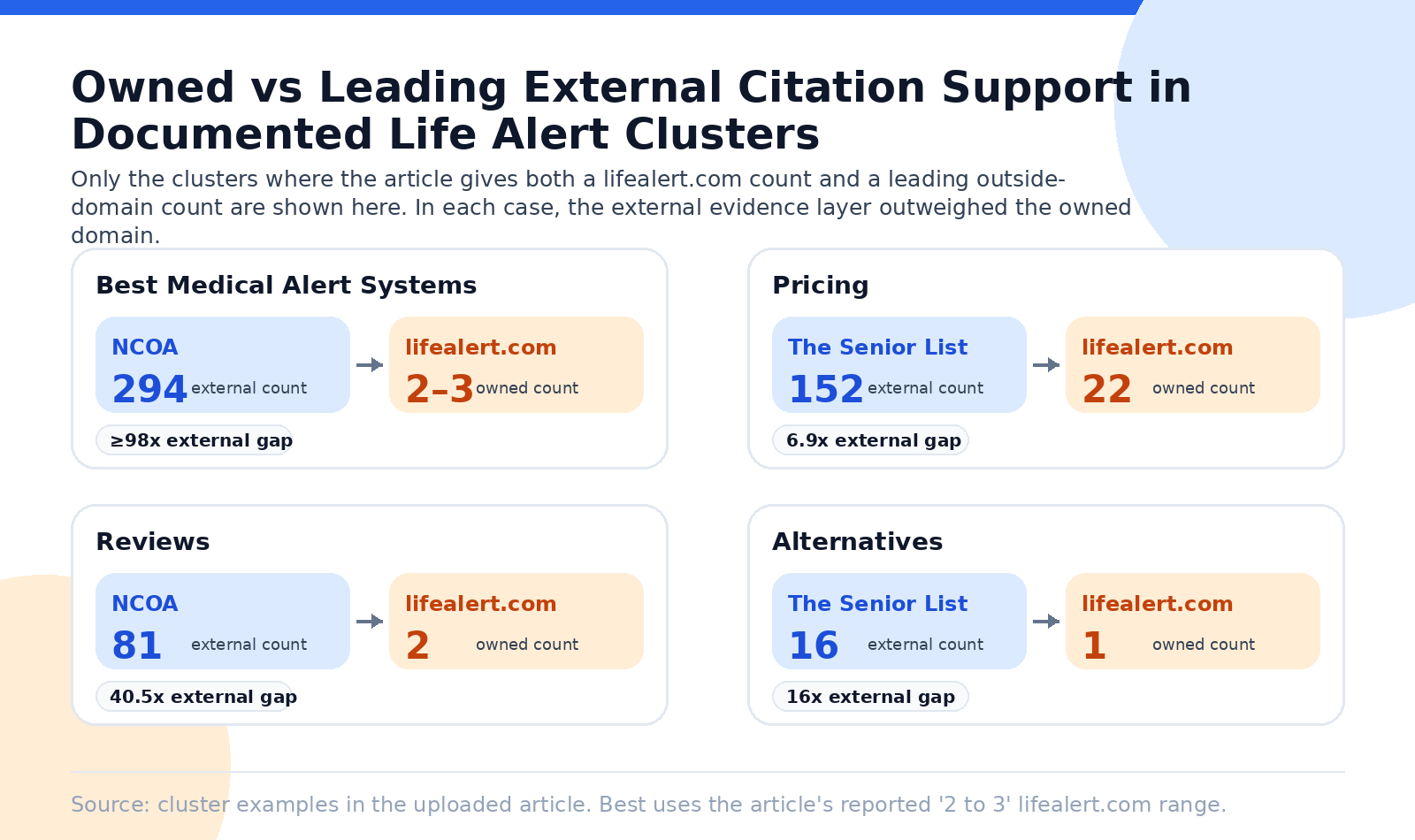

Best Medical Alert Systems: The Clearest Citation Failure

The strongest single example was Best Medical Alert Systems.

This cluster covered 259 prompts and 251,041 modeled queries. Life Alert appeared in only 14.2% of prompts, and even those appearances produced 0.0% recommendation share, 0.0% Top 1, 0.0% Top 3, and 0.0% Top 10.

The citation layer was heavily concentrated in third-party authorities:

- NCOA: 294 estimated citations

- seniorliving.org: 119

- safehome.org: 118

- Forbes: 98

- The Senior List: 86

- U.S. News: 53

- SafeWise: 46

By contrast, lifealert.com appeared only 2 to 3 times. The report also noted that the cited NCOA review for Life Alert was unfavorable.

This is what a structurally hard citation environment looks like. The most influential external source was not merely more visible than the brand's own domain. It was dominant and not recommendation-supportive.

Comparisons: The Brand Entered the Conversation, But Not the Recommendation Layer

The Medical Alert System Comparisons (VS) cluster covered 63 prompts and 141,110 modeled queries. Life Alert appeared in 83% of prompts, which means the brand was very much in the consideration set.

But recommendation and ranking outcomes were still all zero.

The citation layer across all six LLMs was shaped by:

- theseniorlist.com

- retirementliving.com

- safehome.org

The report described lifealert.com as virtually absent from citations in this cluster.

That is one of the clearest insights in this case study: a brand can dominate awareness inside a comparison prompt family and still lose the recommendation layer if the external comparison sources do not support it.

Pricing: The Biggest Demand Pool Was Also a Citation-Narrative Problem

The Pricing cluster was the largest in the baseline:

- 210 prompts

- 1,137,893 modeled queries

- 55.71% presence

- 0.0% recommendation share

- 0.0% ranking capture

Citations were led by:

- theseniorlist.com: 152

- seniorliving.org: 139

- safehome.org: 117

- ncoa.org: 102

Life Alert's own domain had an estimated 22 citations, which is not nothing. But the report explicitly described Life Alert's pricing content as non-transparent.

That distinction is important. This was not a case where Life Alert had no official-domain visibility at all. It had some. But the official-domain contribution was not recommendation-supportive, and it was still materially weaker than the editorial layer shaping the category narrative.

Reviews: Sparse Owned Support in a Decision-Stage Cluster

The Reviews cluster covered 58 prompts and 15,989 modeled queries. Life Alert appeared in 29.31% of prompts, but recommendation and ranking stayed at zero.

Citations were led by:

- NCOA: 81

- safehome.org: 34

- seniorliving.org: 31

- theseniorlist.com: 28

- U.S. News: 27

Life Alert had only:

- 2 citations from lifealert.com

- 3 U.S. News pages tied to its appearances

This is one of the simplest ways to explain the evidence layer problem to buyers or investors: in a review-led cluster, the brand's own domain was not missing entirely, but it was dramatically underweighted compared with the domains AI systems trusted for category explanation.

Alternatives: Full Presence, Almost No Citation Power

The Alternatives cluster is one of the most striking findings in the packet.

Life Alert had a 100% presence rate across 25 prompts and 207,612 modeled queries. In other words, it was always in scope when buyers explicitly asked for alternatives.

Yet recommendation and ranking capture remained 0.0%.

The citation layer was led by:

- theseniorlist.com: 16

- safewise.com: 14

- ncoa.org: 12

- Forbes: 10

- retirementliving.com: 9

lifealert.com was cited once.

This is a textbook example of a brand being visible as the problem statement rather than the solution.

Compare Medical Alert Systems: Some Support, No Endorsement

The Compare Medical Alert Systems cluster covered 22 prompts and 2,446 modeled queries. Life Alert appeared in roughly 27.27% of prompts.

The most cited domains were:

- ncoa.org: 16

- safehome.org: 9

- theseniorlist.com: 7

The packet explicitly noted that Life Alert did receive some cited support in that cluster, but those same sources did not position it as a top pick.

This is another useful case-study distinction:

- citation presence existed

- recommendation support did not

That is why the analysis must separate citation frequency from recommendation outcome.
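One way to keep the two apart is to record, for each citation, both the cited domain and whether the cited source actually positions the brand as a pick. The following is a minimal sketch with made-up records. The `Citation` fields, including the `supports_recommendation` flag, are assumptions for illustration and are not the report's schema.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Citation:
    """Hypothetical record for one cited source in one AI answer."""
    domain: str
    mentions_brand: bool           # the cited page discusses the brand
    supports_recommendation: bool  # the cited page positions the brand as a top pick


# Illustrative records only; these are not data points from the report.
citations = [
    Citation("ncoa.org", mentions_brand=True, supports_recommendation=False),
    Citation("ncoa.org", mentions_brand=False, supports_recommendation=False),
    Citation("safehome.org", mentions_brand=True, supports_recommendation=False),
    Citation("theseniorlist.com", mentions_brand=True, supports_recommendation=False),
]

# Citation frequency: how often each domain is cited at all.
citation_frequency = Counter(c.domain for c in citations)

# Citation presence for the brand: cited pages that discuss it.
brand_citations = sum(c.mentions_brand for c in citations)

# Recommendation support: cited pages that discuss it AND endorse it.
supportive_citations = sum(
    c.mentions_brand and c.supports_recommendation for c in citations
)

print(citation_frequency.most_common())
print(f"brand citations: {brand_citations}, supportive: {supportive_citations}")
```

Counting the two quantities separately makes a pattern like the one in this cluster visible: brand citations can be above zero while supportive citations stay at zero.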

How to Choose: Branded Support Without Organic Recommendation Strength

The How to Choose a Medical Alert System cluster covered 83 prompts and 178,448 modeled queries. Life Alert appeared in 28.9% of prompts, with 0.0% recommendation and ranking capture.

Two points stand out:

- Forbes and NCOA appeared on all six platforms

- caring.com appeared on five

Life Alert's own domain had 18 citations, but the report states these were primarily tied to brand-specific queries, not organic discovery prompts.

That is a critical nuance. A brand can appear reasonably often in its own branded prompt environment and still remain weak in the generic explanatory environments that shape buyer preference.

Free Medical Alert Systems: Affordability and Evidence Layers Collided

The Free Medical Alert Systems cluster covered 171 prompts and 343,722 modeled queries. Life Alert appeared in 41.52% of prompts but still recorded 0.0% recommendation and ranking capture.

Citations in the cluster were led by caring.com, with 107 citations across six LLMs.

The report also noted that some competitor vendor domains had stronger disclosed support than Life Alert in this cluster, including:

- lifestation.com: 106 citations across five LLMs

- medicalert.org: 66 citations across five LLMs

The packet characterized owned-domain citations in this cluster as mostly reference points rather than recommendation grounds.

That makes Free one of the clearest examples of the difference between being discussed and being selected.

Legitimacy and Trust: Community and Trust Domains Defined the Answer Set

The Legitimacy & Trust cluster covered 28 prompts and 73,127 modeled queries. Life Alert had 25.0% presence and 0.0% recommendation and ranking capture.

Cluster-wide citations were led by:

- bbb.org: 13

- reddit.com: 12

- trustpilot.com: 10

- ncoa.org: 8

Life Alert's support was narrower and limited to fewer platforms. In the packet, cited support in trust contexts was limited mainly to Google AI Overviews and Gemini.

This matters because legitimacy prompts are not controlled by brand familiarity alone. They are controlled by the trust environments AI systems already use when they need to decide whether a company feels credible enough to recommend.

Owned-Domain Citations Were Real, But Too Narrow to Change the Outcome

The packet does not support a simplistic statement that Life Alert's own domain was absent everywhere. In some clusters, lifealert.com did appear.

But the pattern was narrow and structurally weak:

- Best: 2 to 3 appearances

- Pricing: about 22 citations

- Reviews: 2 citations

- Alternatives: 1 citation

- Features: 6 citations

- How to Choose: 18 citations

Why those citations failed to matter:

- They were often tied to brand-specific prompts rather than organic discovery.

- They were frequently reference-only, not recommendation-supportive.

- They were materially weaker than the editorial domains structuring the answer set.

- In some high-intent areas, the official-domain narrative itself was not helping. Pricing is the clearest example.

This is why citation architecture is not just about having citations. It is about having the right citations, in the right environments, with the right framing.
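The gap between owned and third-party citations can be made concrete with the estimated counts given in the cluster sections above. The sketch below compares lifealert.com with the single most-cited domain in each cluster where both figures are stated. For Best, it assumes the upper end of the "2 to 3" range for lifealert.com, which makes the ratio conservative.

```python
# (lifealert.com citations, leading domain, leading domain citations),
# taken from the cluster sections of this article.
clusters = {
    "Best": (3, "NCOA", 294),  # assumption: upper end of "2 to 3"
    "Pricing": (22, "theseniorlist.com", 152),
    "Reviews": (2, "NCOA", 81),
    "Alternatives": (1, "theseniorlist.com", 16),
}

# Ratio of the leading domain's citations to lifealert.com's citations.
ratios = {
    name: leader_count / owned
    for name, (owned, _leader, leader_count) in clusters.items()
}

for name, (_owned, leader, _leader_count) in clusters.items():
    print(f"{name}: {leader} cited {ratios[name]:.1f}x as often as lifealert.com")
```

These ratios compare lifealert.com with one leading domain per cluster, not with the full editorial layer, so they understate the total imbalance.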

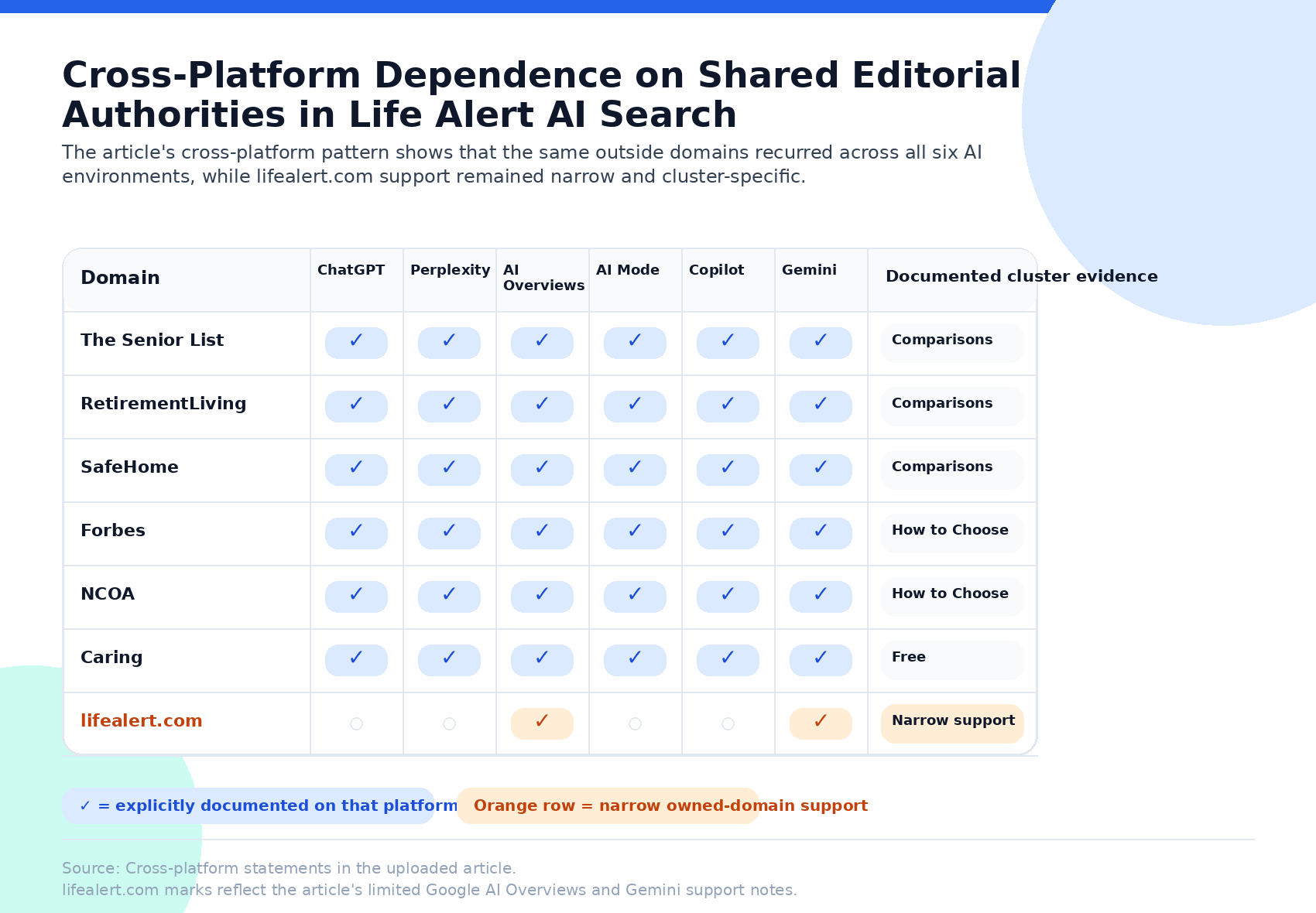

Cross-Platform Pattern: A Shared Evidence Problem, Not One Broken Platform

One of the strongest findings in the report is that this was not a platform-specific failure.

The same third-party domains recurred across multiple or all six LLMs:

- In Comparisons, theseniorlist.com, retirementliving.com, and safehome.org appeared across all six.

- In How to Choose, Forbes and NCOA appeared on all six.

- In Free, caring.com led citations across all six.

At the same time, Life Alert's own-domain support remained narrow:

- virtually absent in Comparisons

- cited once in Alternatives, only by Google AI Overviews

- only 2 citations in Reviews

- limited trust support in Google AI Overviews and Gemini

The implication is straightforward: this was a reusable external evidence problem, not a single-model anomaly.
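A simple way to test for this kind of cross-platform recurrence is a set intersection over the domains each platform cited. The sketch below uses illustrative per-platform sets: the three shared domains mirror the Comparisons finding above, while the `.example` domains are placeholders and not data from the report.

```python
from collections import Counter

# Illustrative domain sets per platform for a single cluster.
shared = {"theseniorlist.com", "retirementliving.com", "safehome.org"}
platform_citations = {
    "ChatGPT": shared | {"other-site-a.example"},
    "Perplexity": shared | {"other-site-b.example"},
    "Google AI Overviews": set(shared),
    "Google AI Mode": set(shared),
    "Microsoft Copilot": shared | {"other-site-a.example"},
    "Gemini": set(shared),
}

# Domains cited on every platform: the reusable evidence layer.
cited_by_all = set.intersection(*platform_citations.values())

# Number of platforms citing each domain.
platform_coverage = Counter(
    domain for domains in platform_citations.values() for domain in domains
)

# Domains that show up on only one platform: candidates for a single-model quirk.
single_platform = sorted(d for d, n in platform_coverage.items() if n == 1)

print(sorted(cited_by_all))
print(single_platform)
```

If the same domains keep landing in `cited_by_all` across clusters, the pattern points to a shared evidence layer rather than one platform's behavior.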

Directional Competitor Pressure

The baseline report does not provide a verified total-market competitor league table, and it does not identify confirmed overall Top 1 or Top 3 leaders from the ranking packet.

That said, the packet directionally named a recurring competitor set more favorably positioned than Life Alert in at least some clusters:

- Medical Guardian

- Bay Alarm Medical

- MobileHelp

- LifeStation

- Philips Lifeline

- Lively

- LifeFone

- ADT Health

The strongest directional threats in the packet were tied to:

- best-of prompts

- pricing

- comparisons

- alternatives

- affordability and free-system contexts

A separate directional packet, built for lead-generation use rather than full baseline market-share reporting, went even further. It suggested that:

- five to seven editorial domains controlled 72% to 93% of all LLM citations in the category

- competitor domains were cited 3x to 14x more often than lifealert.com

- transparent pricing pages, dedicated comparison pages, financial-assistance content, and feature landing pages were helping competitors earn citation advantage

Those directional points are useful for interpretation, but the April 2026 1,026-prompt baseline remains the primary source of truth for this case study.

What This Case Study Shows

This case study shows five things clearly:

1. Citation architecture can overpower brand familiarity

Life Alert is a known brand. That did not make it recommendation-qualified.

2. The recommendation layer is built from external evidence

Editorial, nonprofit, review, comparison, and trust domains shaped the answer set more than Life Alert's own domain did.

3. Owned content can be present without being persuasive

Official-domain citations existed in some clusters, but they were often too sparse, too branded, or too reference-oriented to alter recommendation behavior.

4. High-intent demand was concentrated in exactly the wrong places

The biggest clusters were Pricing, Free, Best, Alternatives, and How to Choose. Those were also the places where the evidence layer was least supportive.

5. This was not a discovery problem

It was a recommendation-qualification problem rooted in the evidence layer.

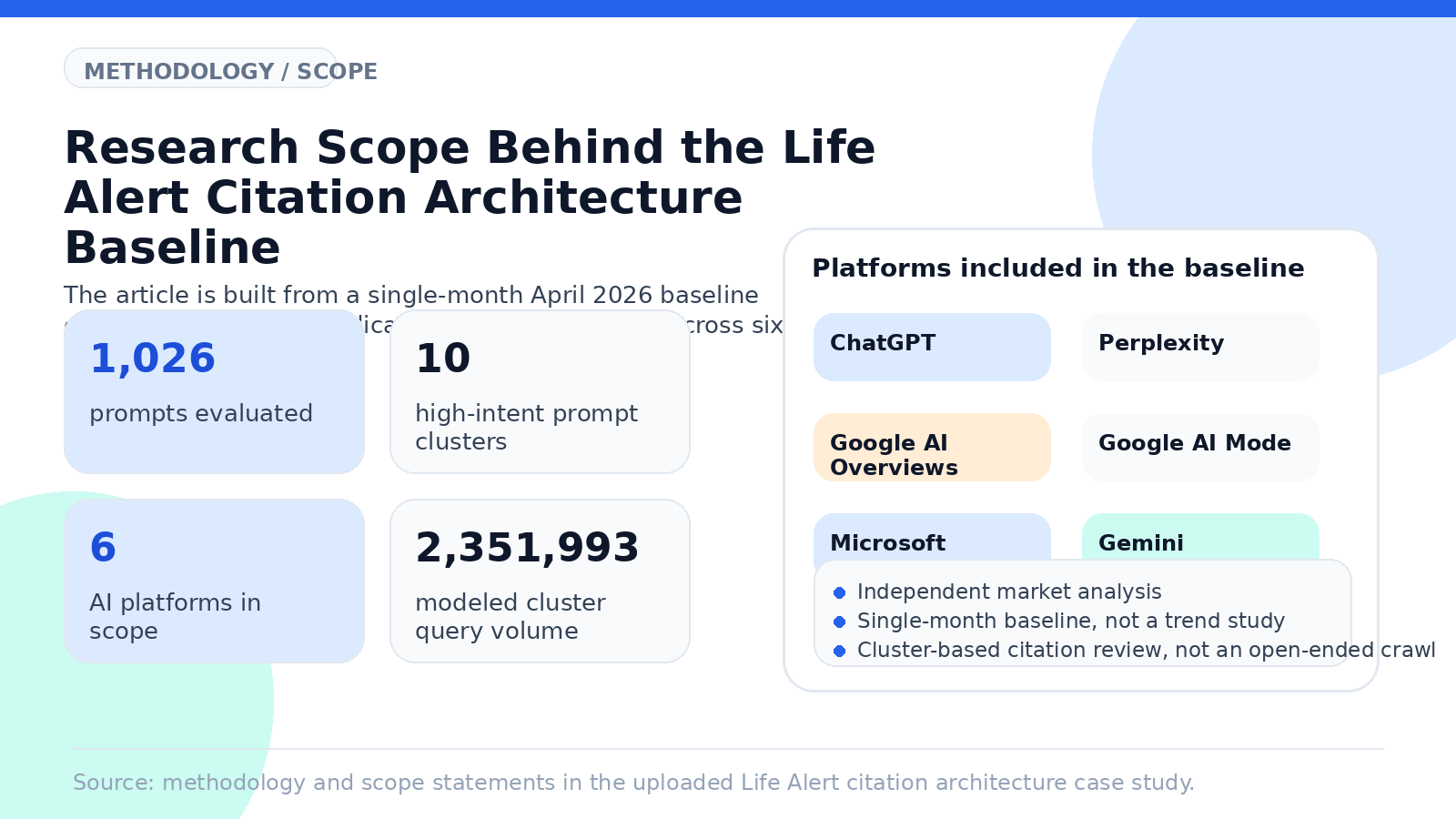

Methodology

Primary source of truth

This case study is based primarily on the April 2026 Life Alert baseline report, which covered:

- 1,026 prompts

- 10 high-intent prompt clusters

- 6 AI platforms

- 2,351,993 total modeled cluster query volume

The platforms in scope were:

- ChatGPT

- Perplexity

- Google AI Overviews

- Google AI Mode

- Microsoft Copilot

- Gemini

How the citation analysis worked

The report's citation analysis examined which domains and source types AI platforms relied on when constructing answers in each cluster. That included official vendor domains, editorial review sites, nonprofit or trust-oriented sources, comparison pages, community forums, and other referenced content.

The citation review was cluster-based, not an open-ended crawl of the entire web.

Metric integrity

The report explicitly separates:

- presence rate from ranking share

- recommendation share from ranking share

- citation frequency from endorsement

- cluster-level outputs from total-market outputs

- directional exposure from realized revenue

That separation is part of the methodology, not a caveat added afterward.
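To illustrate why the separation matters, here is a minimal sketch that computes presence, recommendation, and ranking metrics as independent quantities from per-response records. The record fields and the exact metric definitions are assumptions made for this example; the report's own formulas are not reproduced here.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ResponseRecord:
    """Hypothetical record for one AI response to one prompt."""
    brand_mentioned: bool
    brand_recommended: bool
    brand_rank: Optional[int]  # position in a ranked list, None if unranked


def rate(hits: int, total: int) -> float:
    """Return hits / total, or 0.0 when there is nothing to divide by."""
    return hits / total if total else 0.0


def summarize(records: list[ResponseRecord], top_k: int = 3) -> dict[str, float]:
    total = len(records)
    mentions = sum(r.brand_mentioned for r in records)
    recommended = sum(r.brand_recommended for r in records)
    in_top_k = sum(
        r.brand_rank is not None and r.brand_rank <= top_k for r in records
    )
    return {
        "presence_rate": rate(mentions, total),
        "recommendation_share": rate(recommended, total),
        "top_k_share": rate(in_top_k, total),
        # Assumed definition: share of mentions that reach the top k.
        "mention_to_top_conversion": rate(in_top_k, mentions),
    }


# Illustrative records: mentioned in half of responses, never recommended or ranked.
records = [
    ResponseRecord(brand_mentioned=True, brand_recommended=False, brand_rank=None),
    ResponseRecord(brand_mentioned=True, brand_recommended=False, brand_rank=None),
    ResponseRecord(brand_mentioned=False, brand_recommended=False, brand_rank=None),
    ResponseRecord(brand_mentioned=False, brand_recommended=False, brand_rank=None),
]

summary = summarize(records)
print(summary)
```

Because each metric has its own numerator, a brand can post a high presence rate while every outcome metric stays at zero, which is the shape of the Life Alert baseline.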

Limitations

This is a single-month baseline, not a trend study.

Other important limitations:

- the report is company-specific to Life Alert

- competitors are discussed directionally unless the packet directly supports more

- the packet does not provide a total-market competitor SOV table

- the packet does not establish verified overall Top 1 or Top 3 leaders across the market

- economic interpretation is directional because modeled commercial value fields in the baseline are zeroed out

- citation evidence was synthesized from packet rollups and is not a legal or reputational review

A separate 919-observation directional packet exists and is useful for interpretation, but it was designed for lead-generation use, not as the primary market-share baseline. Where the baseline and supplemental packet differ in scope, the baseline controls this case study.

Frequently Asked Questions

What is citation architecture in AI search?

Citation architecture is the mix of owned and third-party sources AI systems rely on when they explain, compare, and recommend brands.

Was Life Alert invisible in AI search?

No. Life Alert appeared in 51.6% of evaluated prompts. The problem was not visibility alone. The problem was failure to convert visibility into recommendation and ranking capture.

Why didn't lifealert.com citations fix the problem?

Because citation frequency alone does not equal endorsement. In several clusters, Life Alert's own-domain citations were too sparse, too narrow, too brand-specific, or not recommendation-supportive.

Which source environments mattered most?

Editorial review, nonprofit guidance, comparison publishers, and trust environments mattered most. Recurring examples included NCOA, Forbes, SeniorLiving, SafeHome, The Senior List, U.S. News, Caring, BBB, Reddit, and Trustpilot.

What does this case study not claim?

It does not claim audited market-wide competitor share tables. It does not claim realized revenue loss. It does not claim that citation volume alone proves recommendation strength.

Final Thoughts

Get your free report to see how LLM Authority Index separates presence, recommendation share, ranking capture, citation architecture, and recoverability across AI buying journeys.
